Google’s guide covers foundational best practices, addresses misconceptions that have gained traction in the AEO and GEO communities, and includes a section on emerging agentic experiences. For anyone following the growing body of advice around AI search optimization, several sections are worth reading closely.

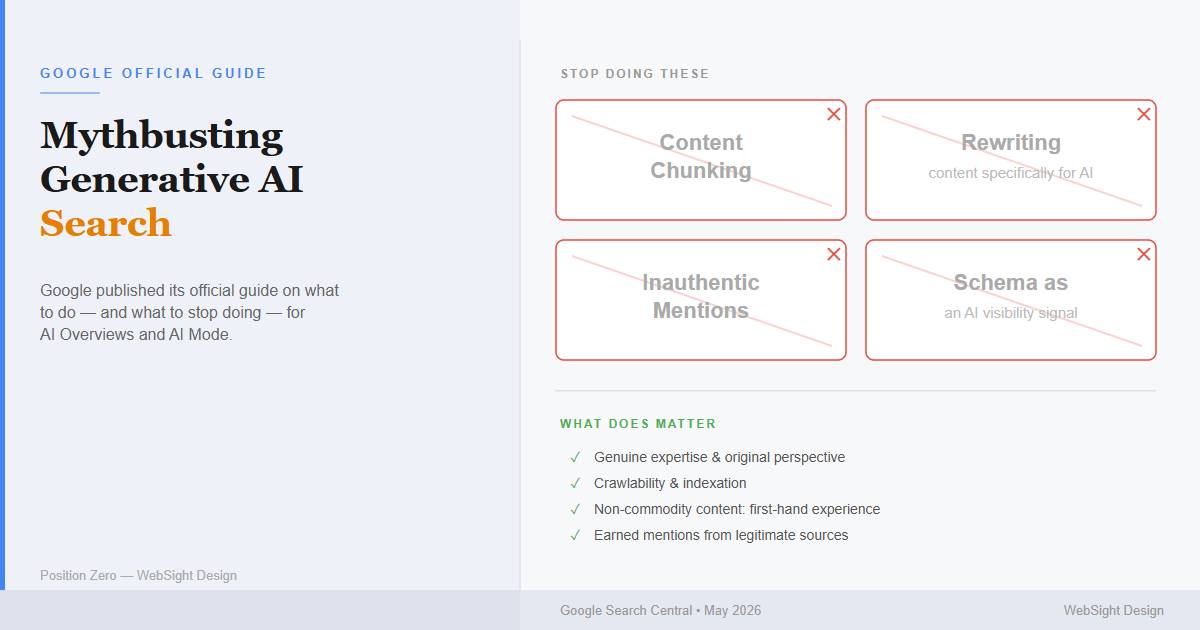

What Google Says to Stop Doing

A notable portion of the guide is dedicated to tactics Google considers unnecessary or counterproductive for its search systems, under the heading “Mythbusting generative AI search.”

llms.txt files. Google states that website owners do not need to create machine-readable files, AI text files, or Markdown documents to appear in generative AI search. The company notes it may discover and index those files, but does not treat them as special signals for ranking or AI feature inclusion.

Content “chunking.” Google states there is no requirement to break content into smaller segments to help AI systems process it. According to the guide, Google’s systems are capable of understanding multiple topics within a single page and surfacing the relevant portion in response to a query. The company advises that page length should be determined by audience and subject matter, not by assumptions about how AI reads content. Google’s Danny Sullivan reinforced this in January 2026, stating on the Search Off the Record podcast that he had spoken with engineers who specifically recommended against chunking as an optimization tactic.
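
A toy Python sketch makes "surfacing the relevant portion" of a long page concrete. It is not how Google's systems work. It assumes a page marked up with `## ` headings and uses plain word overlap as a crude stand-in for the semantic understanding the guide describes. The page text and function names are hypothetical. What it shows is that one page covering several topics can still yield a focused section for each query, without the author pre-splitting anything.

```python
import re

# Hypothetical example page: one document covering several related topics.
PAGE = """\
## Choosing a heat pump
Compare heating capacity, efficiency ratings, and climate suitability.

## Installing a heat pump
Installation requires a licensed technician, a level pad, and electrical work.

## Maintaining a heat pump
Clean the filters monthly and schedule an annual inspection.
"""


def split_sections(text: str) -> list[tuple[str, str]]:
    """Split a page into (heading, body) pairs at each '## ' heading.

    Any text before the first heading is ignored in this sketch.
    """
    sections = []
    heading, body = "", []
    for line in text.splitlines():
        if line.startswith("## "):
            if heading:
                sections.append((heading, " ".join(body)))
            heading, body = line[3:].strip(), []
        elif line.strip():
            body.append(line.strip())
    if heading:
        sections.append((heading, " ".join(body)))
    return sections


def tokens(text: str) -> set[str]:
    """Lowercased word set: a crude stand-in for real semantic matching."""
    return set(re.findall(r"[a-z]+", text.lower()))


def best_section(query: str, text: str) -> str:
    """Return the heading of the section sharing the most words with the query."""
    q = tokens(query)
    heading, _ = max(
        split_sections(text),
        key=lambda section: len(q & tokens(section[0] + " " + section[1])),
    )
    return heading


for query in [
    "compare efficiency ratings",
    "does installation need a licensed technician",
    "how often should I clean the filters",
]:
    print(query, "->", best_section(query, PAGE))
```

A real retrieval system would match on meaning rather than shared words; the structural point is the same.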

Rewriting content specifically for AI. The guide states that AI systems can understand synonyms and general meaning without requiring content to be written in a particular style. Google says website owners do not need to worry about capturing every long-tail keyword variation or phrasing a query might take.

Inauthentic “mentions.” Pursuing manufactured brand mentions across third-party blogs, forums, and discussion sites — sometimes promoted as a way to increase AI citation frequency — is flagged as a potential spam policy violation. Google notes that its generative AI features rely on the same quality signals and spam filters as the rest of its search systems.

Treating schema.org markup as an AI signal. Structured data is not required for generative AI search, and Google says there is no special markup that improves AI visibility. The guide recommends continuing to use structured data as part of a broader SEO strategy, primarily for rich result eligibility, but cautions against treating it as an AEO-specific lever.
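
For sites that keep using structured data for rich result eligibility, Google recommends the JSON-LD format. The Python sketch below builds a minimal schema.org `Article` object and wraps it in a script tag. The headline, author, and date are placeholders, `article_jsonld` is a hypothetical helper, and, in line with the guide, nothing here acts as an AI-specific signal.

```python
import json


def article_jsonld(headline: str, author: str, date_published: str) -> str:
    """Return a <script> tag containing schema.org Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,  # ISO 8601 date string
    }
    # Escape "</" so text such as "</script>" inside a value cannot close the tag early;
    # "\/" is a valid JSON escape, so parsers still read the original string.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Placeholder values for illustration only.
print(article_jsonld("Example headline", "Jane Doe", "2026-01-15"))
```

Google's Rich Results Test can confirm whether markup like this makes a page eligible for a given rich result.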

What the Guide Says Does Matter

On the positive side, Google’s recommendations align closely with established SEO principles, with an emphasis on content quality as the primary driver of AI visibility.

The guide draws a distinction between what it calls “commodity” and “non-commodity” content. Commodity content — general advice, aggregated tips, or information that restates what is widely available — is positioned as less likely to perform well in AI-generated responses. Non-commodity content, defined as material that offers genuine expertise, first-hand experience, or a perspective not easily replicated elsewhere, is described as the stronger long-term investment.

What actually separates brands that get cited from brands that do not is whether the content itself says something worth citing.

On the technical side, the guide confirms that a page must be indexed and eligible to appear in standard Google Search results before it can be included in AI features. Crawlability, page experience, JavaScript SEO practices, and duplicate content management all remain relevant factors.
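
Because AI feature inclusion depends on a page being crawlable and indexable first, a basic self-check is worth automating. The sketch below uses only Python's standard library: `urllib.robotparser` to test whether robots.txt rules let Googlebot fetch a URL, and `html.parser` to look for a `noindex` robots meta tag. The robots.txt and HTML are inline samples, and the helper names are hypothetical. Note that the standard-library parser applies the first matching rule, whereas Google uses the most specific match, so edge cases can differ; a real audit would also fetch live files and check `X-Robots-Tag` headers, canonicals, and rendering.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt content (not from any real site).
ROBOTS_TXT = """\
User-agent: *
Disallow: /drafts/
"""


def can_crawl(robots_txt: str, url: str, agent: str = "Googlebot") -> bool:
    """Return True if the robots.txt rules allow `agent` to fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # feed rules directly instead of read()
    return parser.can_fetch(agent, url)


class RobotsMetaFinder(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in {"robots", "googlebot"}:
            for directive in attr.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def is_indexable(html: str) -> bool:
    """Return False if a robots meta tag contains 'noindex' or 'none'."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return not ({"noindex", "none"} & finder.directives)


print(can_crawl(ROBOTS_TXT, "https://example.com/blog/post"))
print(can_crawl(ROBOTS_TXT, "https://example.com/drafts/post"))
print(is_indexable('<meta name="robots" content="noindex, follow">'))
```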

Google also explains the mechanism behind its AI features: retrieval-augmented generation (RAG), which grounds AI responses in content retrieved from the Search index using the same core ranking signals that govern traditional search results. The implication is that AI Overviews and AI Mode are extensions of the existing search system, not a separate optimization target.
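
The retrieve-then-generate pattern can be illustrated with a toy Python sketch. It assumes a tiny in-memory "index" with hypothetical URLs and uses word overlap in place of Google's ranking signals; the generation step is reduced to assembling a grounded prompt with numbered sources, which is where a language model would take over. None of this reflects Google's actual implementation. The structural takeaway is that a page which is never retrieved never reaches the model, so it cannot be cited.

```python
import re

# Hypothetical mini index: URL -> page text.
INDEX = {
    "https://example.com/heat-pump-guide": (
        "Heat pumps move heat instead of generating it, "
        "which makes them efficient in mild climates."
    ),
    "https://example.com/furnace-basics": (
        "Gas furnaces burn fuel to generate heat and suit very cold climates."
    ),
    "https://example.com/about": "We are a family-owned HVAC company founded in 1998.",
}


def words(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, index: dict[str, str], k: int = 2) -> list[str]:
    """Return up to k URLs ranked by word overlap (a stand-in for real ranking)."""
    q = words(query)
    ranked = sorted(index, key=lambda url: len(q & words(index[url])), reverse=True)
    return [url for url in ranked[:k] if q & words(index[url])]


def grounded_prompt(query: str, index: dict[str, str], k: int = 2) -> str:
    """Build a prompt asking a model to answer only from retrieved, numbered sources."""
    sources = retrieve(query, index, k)
    lines = [f"[{i}] {url}: {index[url]}" for i, url in enumerate(sources, start=1)]
    return (
        "Answer using only the sources below and cite them by number.\n"
        + "\n".join(lines)
        + f"\n\nQuestion: {query}"
    )


print(grounded_prompt("are heat pumps efficient in cold climates", INDEX))
```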

On query fan-out — the process by which Google’s AI generates multiple related queries to assemble a response — the guide acknowledges the technique but warns against creating large volumes of pages designed to target every possible variation. That approach is described as a violation of Google’s scaled content abuse policy.
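
A toy Python sketch of fan-out helps show why mass-produced variant pages add little. It assumes a hand-written list of sub-queries (a real system would generate them with a model), hypothetical page paths, and simple word-overlap scoring. The point it illustrates is that one comprehensive page can be the best match for many variants, so a separate thin page per variant is not needed to be retrieved.

```python
import re

# Hypothetical pages: one comprehensive guide plus an unrelated page.
PAGES = {
    "/heat-pump-guide": (
        "Heat pump cost, installation, efficiency ratings, cold climate performance, "
        "and maintenance schedules explained."
    ),
    "/company-history": "Our company history since 1998.",
}

# Hypothetical fan-out: sub-queries a system might derive from one broad question.
SUB_QUERIES = [
    "heat pump cost",
    "heat pump installation",
    "heat pump cold climate performance",
    "heat pump maintenance",
]


def words(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def top_page(query: str, pages: dict[str, str]) -> str:
    """Return the path whose text shares the most words with the query."""
    q = words(query)
    return max(pages, key=lambda path: len(q & words(pages[path])))


def fan_out(sub_queries: list[str], pages: dict[str, str]) -> dict[str, list[str]]:
    """Map each retrieved page to the sub-queries it was the best match for."""
    hits: dict[str, list[str]] = {}
    for sub_query in sub_queries:
        hits.setdefault(top_page(sub_query, pages), []).append(sub_query)
    return hits


print(fan_out(SUB_QUERIES, PAGES))
```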

On the AEO and GEO Labels

Google addresses the terminology question directly. The guide acknowledges that “AEO” (answer engine optimization) and “GEO” (generative engine optimization) are terms in active use, but argues that since its generative AI features are built on its core search ranking systems, optimizing for them is still fundamentally SEO.

WebSight Design’s Take

Google’s guidance is clear and well-documented for Google Search specifically. For businesses focused solely on Google AI visibility, the message is straightforward: create genuinely useful content and skip the AI-specific hacks.

The ‘AEO and GEO as segments of SEO’ position holds within the context of Google Search. It is a narrower framing than what many practitioners mean by AEO, which typically encompasses optimization across AI platforms that operate independently of Google’s index, such as ChatGPT, Perplexity, and Claude. For businesses focused on brand representation across all major AI surfaces, the discipline involves considerations that go beyond what standard SEO practices cover.

The part of this guide we agree with most strongly is the emphasis on content quality as the dominant factor: not schema markup, not technical structure, not any file you place at the root of your domain. All of that matters as part of a broader strategy, but content is always king. A well-structured page with thin content consistently loses to a plainly formatted page with genuine expertise, across every AI platform we test.

On rewriting content for AI: we agree. Remember the old SEO joke: how many SEO managers does it take to change a lightbulb, lamp, incandescent, LED, lantern, floodlight? We do not need an AI version of that. Content that reads naturally and addresses a topic with genuine depth consistently outperforms content rewritten to hit specific phrasing targets. Writing for the question behind the query, rather than the exact words of it, is the more durable approach.

Does paying for brand mentions actually work for AI visibility?

On inauthentic mentions: we agree here too. Paying for fake brand placements across random blogs and forums to game AI citations is the kind of shortcut that sounds clever, but we have been here before in the wild west of SEO. Short-term gains do not mean long-term sustainability, especially if you get dropped from the results for “black hat AEO.”

Mentions from legitimate sources are what carry weight. Real press, genuine reviews, and actual citations are what AI platforms pick up on. Fabricated ones won’t stick, and now they carry spam policy risk on top of that. It’s not worth it. Getting dropped by Google does not just hurt you on Google. Most AI platforms pull from the web, and the web Google indexes is a big part of what they read. Get penalized there and the damage can extend well beyond Google Search. When the biggest player in the room sets a spam policy, it is worth paying attention.

On chunking: the industry is not fully aligned with Google on this one. Some practitioners argue that clear, well-structured content with descriptive headings and focused paragraphs (which is what “chunking” often produces in practice) improves AI extractability regardless of intent. The distinction Google appears to be drawing is between structuring content well for human readers (good) and deliberately fragmenting content to game AI retrieval (unnecessary). Writing clearly organized content for your audience will naturally produce well-structured pages. Doing it specifically to manipulate AI chunking behavior is where Google pushes back, and here we agree as well. Just as with rewriting content for AI, content should always be “chunked” (that is, broken into paragraphs) for the human reader, regardless of how many bots also read it.

Google’s decision in August 2023 to limit FAQ rich results to well-known, authoritative government and health websites makes the same point from a different direction: the Q&A format that was once the go-to chunking tactic for rich snippets is no longer something Google rewards for most sites. On ChatGPT and Perplexity the picture is more nuanced. Question-structured H2 and H3 headings do correlate with higher citation rates, but the reason appears to be that they help readers navigate content quickly, and AI retrieval follows from that. The difference between good structure and bad chunking is intent. Write headings as questions because your readers scan that way. If the bots also benefit, that is a bonus, not the point.

Google’s position on schema is consistent with what we found in our own research. Our analysis of schema markup and AI citations found that schema accounts for roughly 10% of citation factor weighting across AI platforms — content quality and domain authority account for an estimated 70%. Google is essentially confirming what the controlled experiments already showed. The technical work matters at the margins. The content is the thing.

Where it gets more complicated is outside Google’s walls. Google’s position that AEO is just SEO holds within their ecosystem — but ChatGPT, Perplexity, Claude, and other AI platforms operate independently of Google’s index, and the signals that drive visibility there are not the same. A Google-centric SEO approach covers part of the multi-platform AI visibility problem, not all of it.

Is llms.txt actually worth adding to your site?

On llms.txt specifically: Google calls it unnecessary, and for Google Search that is accurate. The picture elsewhere is more interesting. The clearest documented use case is developer tooling: LangChain shipped mcpdoc, an open-source MCP server that exposes llms.txt files directly to IDEs, and Cursor, Windsurf, and Claude Code can be configured to fetch documentation through it in real time. For AI coding assistants reading your docs, the format works as intended. For AI search crawlers deciding whether to cite your website, no major platform has confirmed it affects citation behavior. The landscape is changing quickly, though, and what is not useful today might find a use tomorrow. An llms.txt file takes little effort to implement, but it is not worth anchoring a strategy to.
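
For a sense of what the file contains, the sketch below parses a small llms.txt following the structure described in the llmstxt.org proposal: an H1 title, a blockquote summary, and H2 sections listing Markdown links with optional notes. The sample content, URLs, and `parse_llms_txt` helper are hypothetical, and the parser handles only that basic shape. It illustrates the developer-tooling use case, the kind of lookup a documentation tool might perform, rather than any effect on search citation.

```python
import re

# Placeholder llms.txt content in the shape described by the llmstxt.org proposal.
SAMPLE = """\
# Example Docs

> Reference documentation for the Example SDK.

## Docs

- [Quickstart](https://example.com/docs/quickstart.md): Install and first request
- [API reference](https://example.com/docs/api.md)

## Optional

- [Changelog](https://example.com/changelog.md)
"""

# Matches "- [title](url)" with an optional ": notes" suffix.
LINK = re.compile(r"^- \[(?P<title>[^\]]+)\]\((?P<url>[^)]+)\)(?::\s*(?P<notes>.*))?$")


def parse_llms_txt(text: str) -> dict:
    """Parse the title, summary, and per-section links from basic llms.txt content."""
    result = {"title": None, "summary": None, "sections": {}}
    section = None
    for line in text.splitlines():
        line = line.strip()
        if line.startswith("# ") and result["title"] is None:
            result["title"] = line[2:].strip()
        elif line.startswith("> ") and result["summary"] is None:
            result["summary"] = line[2:].strip()
        elif line.startswith("## "):
            section = line[3:].strip()
            result["sections"][section] = []
        elif section and (match := LINK.match(line)):
            result["sections"][section].append(
                {"title": match["title"], "url": match["url"], "notes": match["notes"] or ""}
            )
    return result


doc = parse_llms_txt(SAMPLE)
print(doc["title"], "|", doc["summary"])
for name, links in doc["sections"].items():
    print(name, [link["url"] for link in links])
```

Under the proposal, links in the "Optional" section can be skipped when a tool needs a shorter context.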

Sources

- Optimizing your website for generative AI features on Google Search, Google Search Central

- The Schema Myth: What AI Platforms Actually Use to Decide What to Cite, WebSight Design

- Google doesn’t want you to create bite-sized chunks of your content, Search Engine Land

- llms.txt Files and mcpdoc Server Launch for LangChain and LangGraph, LangChain

- LLMs.txt: Why Brands Rely On It and Why It Doesn’t Work, SE Ranking

- FAQ structured data documentation (deprecated), Google Search Central

- ChatGPT Citations Study: 44% of Citations Come From First Third of Content, Search Engine Land

- Query Fan-Out: How Google AI Generates Multiple Queries to Answer a Single Search, Ahrefs

- Google Search spam policies: Scaled content abuse, Google Search Central

- Google Search spam policies: Link spam, Google Search Central