Schema markup has become the default technical recommendation for anyone trying to improve their visibility in ChatGPT, Perplexity, and Google AI Overviews. The advice runs: implement FAQPage schema and AI will understand your content better. It sounds credible. It travels fast. And two separate studies now suggest it is largely wrong.

What the Experiments Show

In early 2026, SEO consultant Mark Williams-Cook ran a controlled test to establish whether LLMs actually parse JSON-LD as structured data, or just read it as text. He created a fictional company and embedded its address inside invalid, made-up schema markup, with no visible text equivalent on the page.

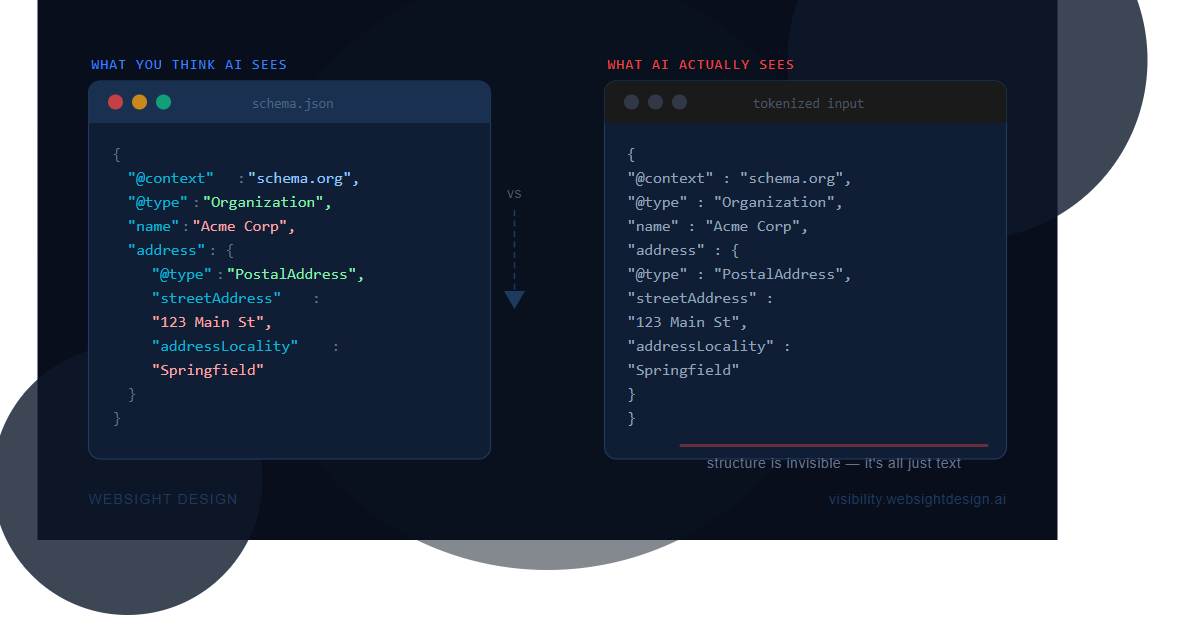

Both ChatGPT and Perplexity returned the address. Not because they understood the schema, but because they read the script block as raw text, the same way they read everything else. The @type declarations, the property relationships, the structured hierarchy — none of it registered. The models saw a block of text that happened to contain an address.

LLMs tokenise JSON-LD as plain text. The structured relationships you build into schema are not relationships the model sees.
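The difference between the two readings can be sketched in a few lines of Python. Everything here is hypothetical: the page, the company, the address, the made-up `@type`, and the tiny `KNOWN_TYPES` set are illustrations, not material from the Williams-Cook test. The "text reader" is a crude stand-in for a model seeing raw characters, not a description of any platform's actual pipeline.

```python
import json
import re
from html.parser import HTMLParser

# Hypothetical page: the address exists only inside an invalid, made-up schema block.
PAGE = """
<html><body>
<h1>Example Widgets Ltd</h1>
<script type="application/ld+json">
{"@type": "NotARealType", "madeUpAddressField": "12 Example Street, Testville"}
</script>
</body></html>
"""

# Tiny illustrative subset of recognised types (an assumption for this sketch).
KNOWN_TYPES = {"Organization", "LocalBusiness", "PostalAddress"}


class ScriptCollector(HTMLParser):
    """Collects the raw contents of every <script> element."""

    def __init__(self):
        super().__init__()
        self.in_script = False
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script":
            self.in_script = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self.in_script = False

    def handle_data(self, data):
        if self.in_script:
            self.blocks[-1] += data


def read_as_text(html):
    """Text-style reading: search the raw characters, ignoring any structure."""
    match = re.search(r"\d+ [A-Z][a-z]+ Street, [A-Z][a-z]+", html)
    return match.group(0) if match else None


def read_as_schema(html):
    """Structure-style reading: only trust an address on a recognised type and property."""
    collector = ScriptCollector()
    collector.feed(html)
    for block in collector.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue
        if isinstance(data, dict) and data.get("@type") in KNOWN_TYPES and "address" in data:
            return data["address"]
    return None


print(read_as_text(PAGE))    # finds the address string
print(read_as_schema(PAGE))  # None: the type and property are not recognised
```

A reader that depended on the schema's structure would come back empty-handed on this page. A reader that only sees characters finds the address anyway, which is the behaviour the test observed.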

Search Atlas reached a similar conclusion from a different angle. Their December 2024 study compared schema coverage against citation rates across OpenAI, Gemini, and Perplexity. Domains with comprehensive schema coverage performed no better than domains with minimal or no schema across all three platforms.

Where the Correlation Comes From

Some studies do show a positive correlation between schema presence and AI visibility. Ahrefs offered the most coherent explanation: sites that implement schema tend to be better-maintained and higher-quality overall. The schema is not driving citations. Site quality is driving both the schema adoption and the citations independently.
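A small simulation shows how this kind of confounding can produce a correlation on its own. This is a toy model, not a reconstruction of any study: the uniform quality score and the rule that both schema adoption and citation probability equal that score are assumptions made purely for illustration. Schema has no effect on citations anywhere in the code.

```python
import random

random.seed(0)  # fixed seed so the sketch is repeatable

sites = []
for _ in range(50_000):
    quality = random.random()               # hidden site quality, 0..1 (assumed)
    has_schema = random.random() < quality  # better sites adopt schema more often (assumed)
    cited = random.random() < quality       # citations depend on quality only, never on schema
    sites.append((quality, has_schema, cited))


def citation_rate(rows):
    """Share of sites in `rows` that were cited."""
    return sum(cited for _, _, cited in rows) / len(rows)


def schema_gap(rows):
    """Citation rate of schema sites minus citation rate of non-schema sites."""
    with_schema = [r for r in rows if r[1]]
    without_schema = [r for r in rows if not r[1]]
    return citation_rate(with_schema) - citation_rate(without_schema)


overall_gap = schema_gap(sites)

# Compare like with like: only sites of roughly equal quality.
similar_sites = [r for r in sites if 0.45 <= r[0] <= 0.55]
matched_gap = schema_gap(similar_sites)

print(f"gap across all sites:        {overall_gap:.3f}")
print(f"gap at similar quality only: {matched_gap:.3f}")
```

Under these assumptions the gap across all sites comes out large, while the gap among sites of similar quality is close to zero. A naive comparison would credit schema with an advantage that belongs entirely to site quality.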

One genuine exception exists. Microsoft’s Fabrice Canel stated at SMX Munich in March 2025 that schema helps Microsoft’s LLMs understand content. That is an on-the-record platform statement and worth acknowledging. It is also the strongest direct evidence in favour of schema for AI, which says something about how thin the supporting case is elsewhere.

What Actually Matters

The research across platforms points consistently to a different set of signals. Content quality and domain authority account for an estimated 70% of the weighting among citation factors; schema accounts for roughly 10%. The practical priorities, in order of impact:

- Content quality and topical authority. The single highest-leverage factor. A well-written, credible page on an authoritative domain outperforms technically optimised content on a weak one every time. AI systems have processed enough of the web to distinguish credible from thin, regardless of how the thin content is marked up.

- Recency. In a 2026 analysis, ChatGPT cited content published within the previous 30 days at an 82% rate. Perplexity’s window is even tighter. AI tools that retrieve live web content are especially sensitive to publication and update dates. Keeping content current is a higher-leverage activity than adding schema to static pages.

- Question-structured headings. H2 and H3 headings written as questions help AI systems extract your content cleanly. This is the kernel of truth inside the FAQ advice. It comes from the visible heading structure, not from schema layered on top. Write the heading — you do not need the markup to get the benefit.

- Primary data and named sources. Citing research, naming authors, and linking to original data signals credibility to the models deciding what to surface. Original data that does not exist elsewhere is particularly high-value, because the AI has no alternative source to prefer.
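The heading advice in the list above is easy to check mechanically. The following is a minimal Python sketch that pulls the H2 and H3 headings out of a page and flags which ones read as questions. The `QUESTION_WORDS` list, the `is_question` heuristic, and the sample headings are all assumptions for illustration; nothing here reflects how any AI system actually evaluates headings.

```python
from html.parser import HTMLParser

# Hypothetical list of words that commonly open a question.
QUESTION_WORDS = ("what", "why", "how", "when", "where", "who", "which",
                  "can", "does", "do", "is", "are", "should")


class HeadingCollector(HTMLParser):
    """Collects the visible text of every <h2> and <h3> element."""

    def __init__(self):
        super().__init__()
        self.current = None   # heading tag currently being read, or None
        self.headings = []    # list of (tag, text) pairs

    def handle_starttag(self, tag, attrs):
        if tag in ("h2", "h3"):
            self.current = tag
            self.headings.append((tag, ""))

    def handle_endtag(self, tag):
        if tag == self.current:
            self.current = None

    def handle_data(self, data):
        if self.current:
            tag, text = self.headings[-1]
            self.headings[-1] = (tag, text + data)


def is_question(heading):
    """Rough heuristic: ends with '?' or opens with a common question word."""
    stripped = heading.strip()
    words = stripped.lower().split()
    return stripped.endswith("?") or (bool(words) and words[0] in QUESTION_WORDS)


def audit_headings(html):
    """Return (tag, text, looks_like_question) for each H2/H3 in the page."""
    collector = HeadingCollector()
    collector.feed(html)
    return [(tag, text.strip(), is_question(text)) for tag, text in collector.headings]


SAMPLE = """
<h2>Does schema markup improve AI citations?</h2>
<h3>Our methodology</h3>
<h2>How recency affects citation rates</h2>
"""

for tag, text, ok in audit_headings(SAMPLE):
    print(f"{tag}: {'question' if ok else 'statement'} - {text}")
```

The audit works on the visible heading text alone and never looks at markup in script blocks, which mirrors the point that the benefit comes from the headings themselves.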

The Bottom Line

Schema is not useless. It still has value for rich results in traditional search and for maintaining a technically sound site. But if your AI visibility strategy is anchored to schema implementation, you are optimising heavily for something that accounts for roughly 10% of what matters, while the factors that actually move the needle get less attention.

The schema advice is a good example of a pattern that repeats itself in SEO: a technically plausible claim, a few correlational data points, and an industry that repeats it before the controlled experiments catch up. They have now started to.

Sources

- ChatGPT and Perplexity Treat Structured Data As Text On A Page, Search Engine Roundtable

- The Limits of Schema Markup for AI Search: An Empirical Analysis, Search Atlas

- How schema markup fits into AI search, without the hype, Search Engine Land

- How LLMs Interpret Content: Structure Information for AI Search, Search Engine Journal

- How ChatGPT, Perplexity, Gemini, and Claude Actually Decide What to Cite, Yext